Sorry, my mistake.

flok wrote: ↑Wed Jun 26, 2019 7:34 pm Are you sure? Because this is what the wiki says about it:

Robert Pope wrote: ↑Wed Jun 26, 2019 7:18 pm It looks to me like you have a scale mismatch:

value_from_fen is in [0,1]

calculateSigmoid(eval_score) is in [-1,1].

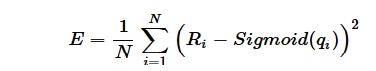

"Ri is the result of the game corresponding to position i; 0 for black win, 0.5 for draw and 1 for white win."

(https://www.chessprogramming.org/Texel% ... ing_Method)
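Robert withdrew the remark ("Sorry, my mistake"), but the general point still holds. The result R_i is in [0, 1], so the mapped eval must also be in [0, 1] before the two are subtracted. Here is a sketch that assumes a hypothetical sigmoid variant returning values in [-1, 1] (Micah's actual calculateSigmoid is not shown in this thread). It compares that variant with the logistic form Ferdy uses below and shows the one-line rescaling that removes the mismatch:

```python
import math

def sigmoid_01(cp, k=1.0):
    """Logistic curve in (0, 1), same form as the sigmoid() in Ferdy's script."""
    return 1.0 / (1.0 + math.exp(-k * cp / 400.0))

def sigmoid_pm1(cp, k=1.0):
    """Hypothetical [-1, 1] variant; uses tanh(x/2) == 2*logistic(x) - 1."""
    return math.tanh(k * cp / 800.0)

def to_unit_interval(x):
    """Rescale a value from [-1, 1] to [0, 1] so it can be compared with R_i."""
    return (x + 1.0) / 2.0

# A drawn game (R = 0.5) with a dead-equal eval of 0 cp
r = 0.5
print('mismatched scales:', (r - sigmoid_pm1(0)) ** 2)                    # compares 0.5 with 0.0
print('rescaled:         ', (r - to_unit_interval(sigmoid_pm1(0))) ** 2)  # compares 0.5 with 0.5
```

With mismatched scales, even a perfectly predicted draw contributes error. After rescaling, the [-1, 1] variant is exactly the logistic curve.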

tuning for the uninformed


-

Robert Pope

- Posts: 558

- Joined: Sat Mar 25, 2006 8:27 pm

Re: tuning for the uninformed

-

Ferdy

- Posts: 4833

- Joined: Sun Aug 10, 2008 3:15 pm

- Location: Philippines

Re: tuning for the uninformed

* Use the score in cp from White's point of view (wpov) in the sigmoid.

* Result: 1 = White wins, 0 = Black wins, 0.5 = draw.

Example: Black is to move in a position that is bad for Black, and the result is 1 (White wins).

Try this code with some test data.


Code: Select all

```text
Input score cp side pov? -1000
Input side to move, [w or b]? b
Input result [1: white_wins, 0: black_wins, 0.5: even]? 1
Input 1 to exit data entry? 1
score cp wpov: 1000
res: 1.0
sig: 0.92414
error = res - sig = 1.0 - 0.92414 = 0.07586
error squared: 0.00575
E = 0.00575
```

Code: Select all

#!/usr/bin/env python3

"""

Return mean of sum of error squared

"""

import math

def sigmoid(cp):

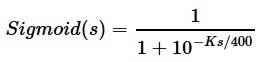

""" Returns scoring rate based on centipawn eval (wpov) of positon

cp:

centipawn score

"""

K = 1.0

return 1 / (1 + math.exp(-K*cp/400))

def E(pos_score, result):

""" Returns mean of sum of error squared

pos_score:

A list with cp score in white pov

result:

A list of either 1, 0, 0.5

1: white wins, 0: black wins, 0.5: even

"""

sum_error_squared = 0

# Use python 3

for cp, res in zip(pos_score, result):

print('score cp wpov: {}'.format(cp))

print('res: {:0.1f}'.format(res))

sig = sigmoid(cp)

print('sig: {:0.5f}'.format(sig))

error = res - sig

print('error = res - sig = {:0.1f} - {:0.5f} = {:0.5f}'.format(res, sig, error))

error_squared = error * error

print('error squared: {:0.5f}'.format(error_squared))

sum_error_squared += error_squared

num_pos = len(result)

return sum_error_squared/num_pos

def main():

pos_score = []

result = []

# User input of pos score and result

while True:

score_cp_side_pov = int(input('Input score cp side pov? '))

stm = input('Input side to move, [w or b]? ')

# Convert score side pov to white pov

if stm == 'b':

score_cp_white_pov = -1 * score_cp_side_pov

else:

score_cp_white_pov = score_cp_side_pov

pos_score.append(score_cp_white_pov)

res = float(input('Input result [1: white_wins, 0: black_wins, 0.5: even]? '))

result.append(res)

try:

e = int(input('Input 1 to exit data entry? '))

if e == 1:

break

except:

pass

value = E(pos_score, result)

print('E = {:0.5f}'.format(value))

if __name__ == '__main__':

main()
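The same calculation can be run without the interactive prompts, which is handy for checking the transcript above or feeding in a fixed list of positions. Below is a minimal sketch that reuses the script's sigmoid formula and side-to-move flip. The helper names to_white_pov and mean_squared_error are made up for this example.

```python
import math

def sigmoid(cp, k=1.0):
    # Same formula as the script's sigmoid(): scoring rate from a white-POV cp score
    return 1.0 / (1.0 + math.exp(-k * cp / 400.0))

def to_white_pov(score_cp_side_pov, stm):
    # Flip the sign when Black is to move, as main() does
    return -score_cp_side_pov if stm == 'b' else score_cp_side_pov

def mean_squared_error(samples, k=1.0):
    """samples: list of (score_cp_side_pov, side_to_move, result) tuples,
    with result in White POV: 1 white wins, 0 black wins, 0.5 draw."""
    total = 0.0
    for score, stm, res in samples:
        total += (res - sigmoid(to_white_pov(score, stm), k)) ** 2
    return total / len(samples)

# The data entered in the transcript: -1000 cp for Black to move, White won
e = mean_squared_error([(-1000, 'b', 1.0)])
print('E = {:0.5f}'.format(e))
```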

-

flok

- Posts: 481

- Joined: Tue Jul 03, 2018 10:19 am

- Full name: Folkert van Heusden

Re: tuning for the uninformed

OK, I found that at least the way I determine K was (is?) incorrect.

I've now implemented a binary search for it: https://github.com/flok99/Micah/blob/ma ... h.cpp#L177

Will try it now; that'll take at least a day.
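The linked binary search itself is not reproduced here because the link is truncated. As a hypothetical sketch of one way to find K, the code below uses a ternary search, which is a common bracketing method for minimizing a unimodal function. It assumes that the error as a function of K has a single minimum in a chosen interval, here [0, 10]. Starting the interval at 0 also keeps K positive. The functions and the synthetic data are illustrative only.

```python
import math

def sigmoid(cp, k):
    return 1.0 / (1.0 + math.exp(-k * cp / 400.0))

def error(k, scores_wpov, results):
    # Mean squared error for a given K (scores already in White's POV)
    return sum((r - sigmoid(s, k)) ** 2 for s, r in zip(scores_wpov, results)) / len(results)

def find_k(scores_wpov, results, lo=0.0, hi=10.0, iters=100):
    """Ternary search for the K minimizing error(); assumes error(K) is unimodal on [lo, hi]."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if error(m1, scores_wpov, results) < error(m2, scores_wpov, results):
            hi = m2  # the minimum cannot lie in (m2, hi]
        else:
            lo = m1  # the minimum cannot lie in [lo, m1)
    return (lo + hi) / 2.0

# Synthetic, noise-free data generated with K = 1.5, so the search should return about 1.5
scores = [-300, -100, 50, 200, 400]
results = [sigmoid(s, 1.5) for s in scores]
print(find_k(scores, results))
```

On real game results the error curve is noisier. Still, a result that is negative or very close to 0 usually points to a bug upstream, such as a viewpoint mismatch, rather than to the search.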


-

Ratosh

- Posts: 77

- Joined: Mon Apr 16, 2018 6:56 pm

Re: tuning for the uninformed

I saw 2 issues in Micah.cpp:

Code: Select all

double K = calculate_error(&work, &results, positions, 1.0);

double min_err = 1000.0;

- Just use a constant K for now; I'm using 1.4 in Pirarucu.

- Set your min_err to the error you get with your current parameter values.

Code: Select all

double K = 1.4;

double min_err = calculate_error(&work, &results, positions, K);
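The reason for the second point is that a tuner only accepts a change if it beats the best error seen so far. If min_err starts at a dummy value like 1000.0, the first candidate is accepted even when it is worse than the untouched parameters. Below is a hypothetical Python sketch of a Texel-style local search that starts from the error of the current values. The error_fn argument stands in for something like calculate_error with K held fixed, and the toy error surface is made up for the example.

```python
def local_optimize(params, error_fn, step=1):
    """Texel-style local search: nudge each integer parameter by +step, then -step,
    and keep a change only if it lowers the error."""
    best = list(params)
    best_err = error_fn(best)  # error of the current values, not a dummy like 1000.0
    improved = True
    while improved:
        improved = False
        for i in range(len(best)):
            for delta in (step, -step):
                trial = list(best)
                trial[i] += delta
                err = error_fn(trial)
                if err < best_err:
                    best, best_err = trial, err
                    improved = True
                    break  # accepted; move on to the next parameter
    return best, best_err

# Toy error surface: minimum at parameters [3, -2]
toy_error = lambda p: (p[0] - 3) ** 2 + (p[1] + 2) ** 2
print(local_optimize([0, 0], toy_error))
```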

-

flok

- Posts: 481

- Joined: Tue Jul 03, 2018 10:19 am

- Full name: Folkert van Heusden

Re: tuning for the uninformed

Ratosh wrote: ↑Thu Jun 27, 2019 7:29 pm I saw 2 issues in Micah.cpp:

Code: Select all

double K = calculate_error(&work, &results, positions, 1.0);

double min_err = 1000.0;

Yes, that's indeed the case!

- Just use a constant K for now, I'm using 1.4 on Pirarucu.

- Set your min_err to the error from your current used variables.

I can't reproduce the 1.4 value, though. I've now implemented a binary search for finding K, and I always end up with -0.002557039260864258.

Please note that I merged the tuning code into master.

-

Robert Pope

- Posts: 558

- Joined: Sat Mar 25, 2006 8:27 pm

Re: tuning for the uninformed

K has to be positive, or the sigmoid goes in the wrong direction, so that's a critical problem.

I would suggest picking a dozen positions and checking your calculation to get K. You should be able to do that by hand and see where it is going wrong.
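Here is a small sketch of that kind of hand check, using five made-up positions (White-POV cp score and result) and the logistic form from Ferdy's script. With a positive K, a White advantage maps above 0.5. With a negative K, the curve is mirrored, so the error for sensible data goes up.

```python
import math

def sigmoid(cp, k):
    return 1.0 / (1.0 + math.exp(-k * cp / 400.0))

def error(k, scores_wpov, results):
    return sum((r - sigmoid(s, k)) ** 2 for s, r in zip(scores_wpov, results)) / len(results)

# Hypothetical hand-checkable positions: White-POV cp score and game result
scores = [300, 150, 0, -200, -400]
results = [1.0, 1.0, 0.5, 0.0, 0.0]

for k in (1.5, -1.5):
    print('K = {:+.1f}: sigmoid(300) = {:.3f}, E = {:.4f}'.format(
        k, sigmoid(300, k), error(k, scores, results)))
```

If a K search on data like this prefers the negative value, the scores and results are probably being compared from different viewpoints.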


-

Ronald

- Posts: 160

- Joined: Tue Jan 23, 2018 10:18 am

- Location: Rotterdam

- Full name: Ronald Friederich

Re: tuning for the uninformed

Hi Folkert,

The sigmoid function is used to map the evaluation score (which is, for instance, in the range -100 to +100) to a value in the range 0 to 1.

A score of -infinity maps to 0, a score of +infinity to 1, and a score of 0 to 0.5.

The constant K defines the "sensitivity" of the sigmoid, i.e. how fast it approaches the border values.
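To see the effect of K, this sketch prints the logistic curve from Ferdy's script at a few scores for three K values. The function is written so that math.exp cannot overflow for very large negative scores, which makes the limits toward 0 and 1 safe to evaluate.

```python
import math

def sigmoid(cp, k):
    """Logistic curve of Ferdy's form, written to avoid math.exp overflow for huge |cp|."""
    x = k * cp / 400.0
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # x < 0, so this cannot overflow
    return z / (1.0 + z)

for k in (0.5, 1.0, 2.0):
    print(k, [round(sigmoid(cp, k), 3) for cp in (-400, -100, 0, 100, 400)])
```

Every row passes through 0.5 at 0 cp. A larger K pushes the same score closer to 0 or 1.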

You should encode the outcome of the positions in your learning set in absolute terms, not relative to the side to move: 0 is a win for Black, 1 is a win for White and 0.5 is a draw. The file "quiet-labeled.epd" is also absolute (1-0 is a win for White, 0-1 a win for Black). If I read the source correctly, you are also doing that.

So you need an absolute score for the position in order to calculate its error. In error_calc_thread the score of the position is calculated via qs(). I suppose the result of qs() is relative to the side to move. If that is the case, I think you should negate the score from qs() when Black is to move.
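Below is a minimal sketch of that conversion. It assumes, as suggested above, that qs() returns a score relative to the side to move. It compares the error with and without the flip on a few made-up samples. The samples, white_pov and mse are hypothetical illustrations, not Micah code.

```python
import math

def sigmoid(cp, k=1.0):
    return 1.0 / (1.0 + math.exp(-k * cp / 400.0))

def white_pov(score_stm, white_to_move):
    """Convert a side-to-move-relative score (as qs() presumably returns) to White's POV."""
    return score_stm if white_to_move else -score_stm

# Hypothetical samples: (qs score relative to side to move, White to move?, result in White POV)
samples = [
    (250, True, 1.0),    # White to move and better, White won
    (250, False, 0.0),   # Black to move and better, Black won
    (-300, False, 1.0),  # Black to move and worse, White won
    (0, True, 0.5),      # balanced, draw
]

def mse(samples, convert):
    total = 0.0
    for score, wtm, res in samples:
        s = white_pov(score, wtm) if convert else score
        total += (res - sigmoid(s)) ** 2
    return total / len(samples)

print('with conversion:   ', mse(samples, True))
print('without conversion:', mse(samples, False))
```

Without the flip, every Black-to-move position is scored against the wrong side's result. That inflates the error and can drag a fitted K toward 0 or below.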


-

flok

- Posts: 481

- Joined: Tue Jul 03, 2018 10:19 am

- Full name: Folkert van Heusden

Re: tuning for the uninformed

Ah! If I do that, then suddenly the K value makes much more sense: 1.516882

Ronald wrote: ↑Thu Jun 27, 2019 9:21 pm So you need an absolute score for the position in order to calculate the error for the position. In error_calc_thread the score of the position is calculated via qs(). I suppose the result of qs() is calculated relative to the side to move. If that is the case I think you should invert the calculated score of qs in case the side to move is black.

The error is then 25.8%.

Thanks a lot!!

-

Sven

- Posts: 4052

- Joined: Thu May 15, 2008 9:57 pm

- Location: Berlin, Germany

- Full name: Sven Schüle

Re: tuning for the uninformed

No, why? I said "same viewpoint", and if the stored value is from White's POV, then the eval must be from White's POV, too. My example was more general and did not assume White POV; it was only meant to show what would happen on a viewpoint mismatch.

Sven Schüle (engine author: Jumbo, KnockOut, Surprise)

-

flok

- Posts: 481

- Joined: Tue Jul 03, 2018 10:19 am

- Full name: Folkert van Heusden

Re: tuning for the uninformed

So instead I could also do (score + 10000) / 20000? (assuming the eval score range is -10k ... 10k)

Ronald wrote: ↑Thu Jun 27, 2019 9:21 pm Hi Folkert,

The sigmoid function is used to map the score of the evaluation (which is f.i. in the range of -100 to +100) to a value in the range of 0 to 1.

A score of -infinity results in a value of 0, a score of +infinity in a value of 1. A score of 0 results in a value of the sigmoid of 0.5.