by Werewolf » Tue Jun 05, 2018 11:10 am

The issue is whether MCTS scaling has inherent properties, as alpha-beta did for ages before Lazy SMP, that let us predict with confidence what the efficiency will be on N threads and how much harder it gets as N rises. If it turns out that efficiency begins to drop at 12 threads, and that this is inherent to the method (rather than a by-product of a new idea the Komodo team are trying), it makes a huge difference.

I'm interested in knowing whether, long-term, we can do better than N^0.8 etc.
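To put rough numbers on that figure: if the effective speedup on N threads really followed N^0.8, per-thread efficiency would fall off as N^-0.2. A minimal sketch in Python, assuming that power law holds exactly; the exponent 0.8 is only the rule of thumb quoted above, and the function names are made up for illustration.

```python
def effective_speedup(threads: int, exponent: float = 0.8) -> float:
    """Speedup over one thread under the ASSUMED power law threads**exponent."""
    return threads ** exponent


def efficiency(threads: int, exponent: float = 0.8) -> float:
    """Fraction of ideal linear speedup actually obtained."""
    return effective_speedup(threads, exponent) / threads


for n in (1, 2, 4, 12, 64):
    print(f"{n:3d} threads: speedup {effective_speedup(n):6.2f}, "
          f"efficiency {efficiency(n):.0%}")
```

Under that assumption, 12 threads are worth about 7.3 single threads and 64 threads about 28. Whether MCTS follows this curve, or any other, is exactly the open question.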

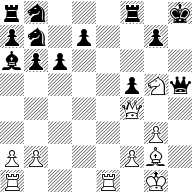

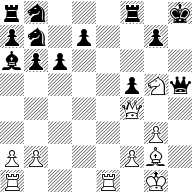

Without any theory, I'd say it is something like a random walk. Is Monte Carlo with 12 threads the same as one thread searching twelve times longer? It probably scales well to at least 64 threads, because I believe that is what AlphaZero was using. Duplicate games with almost random moves will be rare in chess. But Stockfish without a book, on 64 threads with Lazy SMP, was still very predictable in the opening. I am still suspicious of positions like:

[Event "?"]

[Site "?"]

[Date "2018.06.05"]

[Round "?"]

[White "?"]

[Black "?"]

[Result "*"]

[SetUp "1"]

[FEN "rn3r1k/pn1p1ppq/bpp4p/7P/4N1Q1/6P1/PP3PB1/R1B1R1K1 w - -"]

1. Bg5 f5 2. Qf4 hxg5 3. Nxg5 Qxh5 {4. g4!}*

rn3r1k/pn1p2p1/bpp5/5pNq/5Q2/6P1/PP3PB1/R3R1K1 w - -
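For anyone without a board to hand, the FEN above can be expanded into a text diagram. A small sketch using only the Python standard library; the helper name `fen_to_rows` is made up here, and it reads only the piece-placement field, ignoring side to move and castling rights.

```python
def fen_to_rows(fen: str) -> list:
    """Expand the piece-placement field of a FEN into eight 8-character rows."""
    placement = fen.split()[0]
    rows = []
    for rank in placement.split("/"):
        row = ""
        for ch in rank:
            # A digit means that many empty squares; anything else is a piece.
            row += "." * int(ch) if ch.isdigit() else ch
        if len(row) != 8:
            raise ValueError(f"bad rank in FEN: {rank!r}")
        rows.append(row)
    if len(rows) != 8:
        raise ValueError("FEN must have eight ranks")
    return rows


FEN = "rn3r1k/pn1p2p1/bpp5/5pNq/5Q2/6P1/PP3PB1/R3R1K1 w - -"

# Print rank 8 at the top, as seen from White's side.
for rank_no, row in zip(range(8, 0, -1), fen_to_rows(FEN)):
    print(rank_no, " ".join(row))
print("  a b c d e f g h")
```

Uppercase letters are White's pieces and lowercase are Black's, so the fifth rank shows the black pawn on f5, the white knight on g5 and the black queen on h5.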

(Kaissa still needs about depth 27 in the diagram position to find 4. g4 or 4. Bf3, and that is with MultiPV = 15.)

Engine: Kaissa III (512 MB)

by T. Romstad, M. Costalba, J. Kiiski, G. Linscott

33 201:24 +1.80 4.g4 Qg6 (28.957.594.395) 2396

33 201:24 +1.70 4.Bf3 Qg6 5.Kg2 Kg8 6.Rad1 Nc5 7.Rd6 (28.957.594.395) 2396

33 201:24 +0.13 4.Rad1 Kg8 5.Bf3 Qh6 6.b4 Bb5 7.Kg2 Nd8 8.Rd6 Ne6 9.Rdxe6 dxe6 10.Rxe6 g6 11.Qd6 Kg7 (28.957.594.395) 2396

33 201:24 0.00 4.Re7 Nc5 5.Bf3 Qg6 6.Kg2 Kg8 7.Rh1 Ne6 8.Qh4 Qh6 9.Qxh6 gxh6 10.Nxe6 dxe6 11.Rxh6 Bc4 12.b3 Bd5 13.Bxd5 exd5 14.Rg6+ Kh8 (28.957.594.395) 2396

33 201:24 -1.38 4.Rac1 Kg8 5.Bf3 Qh6 6.Kg2 Nd8 7.Re5 d6 8.Rxf5 Nd7 9.Rxf8+ Nxf8 10.Bxc6 Nfe6 11.Nxe6 Qxf4 12.gxf4 Nxc6 13.Nc7 Bb7 14.Nxa8 Bxa8 15.Kh3 Kf7 16.Kg3 Bb7 17.Kg4 (28.957.594.395) 2396

33 201:24 -1.52 4.Re5 Kg8 5.Bf3 Qg6 6.Kg2 Nd8 7.Rh1 Ne6 (28.957.594.395) 2396

33 201:24 -1.59 4.b4 Nd8 5.Bf3 Qg6 6.Re7 Ne6 7.Nxe6 dxe6 8.Re1 Bc8 9.Qh4+ Kg8 10.b5 a5 11.bxc6 Na6 12.Rd1 Qh6 13.Qxh6 gxh6 14.a4 Nc5 15.c7 (28.957.594.395) 2396

33 201:24 -2.52 4.a4 Kg8 (28.957.594.395) 2396

33 201:24 -2.75 4.b3 Kg8 5.Bf3 Qh6 6.Kg2 Nc5 7.Rh1 Ne6 8.Nxe6 Qxe6 9.Qh4 Qh6 10.Qxh6 gxh6 11.Rxh6 Bd3 12.Rah1 Be4 13.Bxe4 fxe4 14.R1h4 (28.957.594.395) 2396

33 201:24 -2.75 4.Bh1 Kg8 5.Bf3 Qh6 6.Kg2 Nc5 7.Rh1 Ne6 8.Nxe6 Qxe6 9.Qh4 Qh6 10.Qxh6 gxh6 11.Rxh6 (28.957.594.395) 2396

33 201:24 -2.86 4.Rab1 Nc5 5.Bf3 Qg6 6.Kg2 Kg8 7.Rh1 Ne6 8.Nxe6 Qxe6 9.Qh4 Qh6 10.Qb4 Qf6 11.Rh5 d5 12.Rbh1 Kf7 13.Qf4 Ke8 14.Rh7 (28.957.594.395) 2396

33 201:24 -2.89 4.a3 Kg8 5.Bf3 Qh6 6.Rad1 (28.957.594.395) 2396

33 201:24 -3.00 4.Be4 Kg8 (28.957.594.395) 2396

32 201:24 -3.05 4.Red1 Qg4 5.Qe3 (28.957.594.395) 2396

32 201:24 -3.99 4.Re3 Qg4 5.Qxg4 fxg4 (28.957.594.395) 2396

best move: g3-g4 time: 201:24.344 min n/s: 2.396.292 nodes: 28.957.594.395
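As a sanity check on those totals, the summary line can be parsed and the nodes-per-second figure recomputed from the node count and elapsed time. A sketch in Python, assuming that `201:24.344 min` means minutes:seconds.milliseconds and that the dots in the counts are thousands separators; the function names are made up for illustration.

```python
import re

SUMMARY = ("best move: g3-g4 time: 201:24.344 min "
           "n/s: 2.396.292 nodes: 28.957.594.395")


def parse_dotted_int(text: str) -> int:
    """Read a count such as '2.396.292', treating dots as thousands separators."""
    return int(text.replace(".", ""))


def parse_summary(line: str) -> dict:
    """Extract elapsed seconds, reported n/s and node count from the summary."""
    m = re.search(
        r"time: (\d+):(\d+\.\d+) min n/s: ([\d.]+) nodes: ([\d.]+)", line)
    if m is None:
        raise ValueError("summary line not in the expected format")
    minutes, seconds = int(m.group(1)), float(m.group(2))
    return {
        "seconds": minutes * 60 + seconds,
        "nps": parse_dotted_int(m.group(3)),
        "nodes": parse_dotted_int(m.group(4)),
    }


info = parse_summary(SUMMARY)
recomputed_nps = info["nodes"] / info["seconds"]
print(f"elapsed: {info['seconds']:.3f} s")
print(f"reported n/s: {info['nps']}, recomputed: {recomputed_nps:.0f}")
```

Under those assumptions the recomputed speed agrees with the reported one to well within a hundredth of a percent, so the three figures in the log are mutually consistent.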

Was AlphaZero trained on this? Probably not, but you really have to search in a very Monte Carlo-like way to find it... Of course we have been spoilt by all the Late Move Reductions since Tord and Fabien, just to name two famous programmers, popularized them sometime before Rybka 1.0. Fritz 10 is probably much better than Stockfish here.